Landscape Research Report


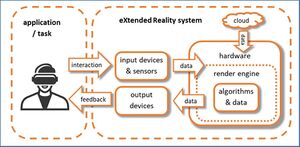

Revision as of 17:37, 20 April 2021

Introduction

This report provides a thorough analysis of the landscape of immersive interactive XR technologies, carried out between July 2019 and November 2020 by the members of the XR4ALL consortium. It is based on the expertise and contributions of a large number of researchers from Fraunhofer HHI, B<>com and Image & 3D Europe. For some sections, additional experts from outside the consortium were invited to contribute.

The document is organised as follows. Sec. 2 defines the scope of eXtended Reality (XR) and sets out clear definitions of the fundamental terms in this domain. A detailed market analysis is presented in Sec. 3. It covers the development and forecast of XR technologies, based on an in-depth analysis of the most recent surveys and reports from various market analysts and consulting firms. The major application domains are derived from these reports. Furthermore, the investments and the expected shipments of devices are reported. Based on the latest analysis by the Venture Reality Fund, the main players and sectors in VR & AR are laid out. The Venture Reality Fund is an investment company looking at technology domains ranging from artificial intelligence and augmented reality to virtual reality to power the future of computing. A complete overview of international, European and regional associations in XR and a recent patent overview conclude this section.

Sec. 4 gives a complete and detailed overview of all the technologies that are necessary for the successful development of future immersive and interactive technologies. The latest research results and the current state of the art are described, with a comprehensive list of references.

The major application domains in XR are presented in Sec. 5. Several up-to-date examples are given in order to demonstrate the capabilities of this technology.

In Sec. 6, the relevant standards and their current state are described. Finally, Sec. 7 gives a detailed overview of EC projects that were or are still active in the domain of XR technologies. The projects are clustered by application domain, which demonstrates the widespread applicability of immersive and interactive technologies.

The scope of eXtended Reality

Paul Milgram defined the well-known Reality-Virtuality Continuum in 1994 [1]. It describes the transition between reality on the one hand and a completely digital, computer-generated environment on the other. From a technology point of view, however, a new umbrella term has been introduced: eXtended Reality (XR). It covers Virtual Reality (VR), Augmented Reality (AR), and Mixed Reality (MR), as well as all future realities such technologies might bring. XR thus spans the full spectrum of real and virtual environments. In Figure 1, the Reality-Virtuality Continuum is extended by the new umbrella term. The figure also shows a lesser-known term, Augmented Virtuality. It refers to an approach in which reality, e.g. the user’s hand, appears in the virtual world; this is usually referred to as Mixed Reality.

Following the most common terminology, the three major scenarios of extended reality are defined as follows, going from left to right in Figure 1. Augmented Reality (AR) consists of augmenting the perception of the real environment with virtual elements by mixing spatially registered digital content with the real world in real time [2]. Pokémon Go and Snapchat filters are commonplace examples of this kind of technology on smartphones or tablets. AR is also widely used in the industry sector, where workers can wear AR glasses to get support during maintenance or for training.

Augmented Virtuality (AV) consists of augmenting the perception of a virtual environment with real elements. These elements of the real world are generally captured in real time and injected into the virtual environment. Capturing the user’s body and injecting it into the virtual environment is a well-known example of AV, aimed at improving the feeling of embodiment.

Virtual Reality (VR) applications use headsets to fully immerse users in a computer-simulated reality. These headsets generate realistic images and sounds, engaging two senses to create an interactive virtual world. Mixed Reality (MR) includes both AR and AV. It blends real and virtual worlds to create complex environments in which physical and digital elements can interact in real time. It is defined as a continuum between the real and the virtual environments but excludes both of these endpoints.

An important question is how broadly the term eXtended Reality (XR) spans technologies and application domains. XR could be considered a fusion of AR, AV, and VR technologies, but in fact it involves many more technology domains. These range from sensing the world (image, video, sound, haptics) to processing the data and rendering. In addition, hardware is needed to sense, capture, track, register, display, and more.

In Figure 2, a simplified schematic diagram of an eXtended Reality system is presented. On the left-hand side, the user performs a task using an XR application. Sec. 5 gives a complete overview of the relevant application domains: advertisement, cultural heritage, education and training, industry 4.0, health and medicine, security, journalism, social VR and tourism. The user interacts with the scene, and this interaction is captured by a range of input devices and sensors, which can be visual, audio, motion, and many more (see Sec. 4.1 and 4.2). The acquired data serves as input for the XR hardware, where further processing is performed in the render engine (see Sec. 4.7). For example, the correct viewpoint is rendered or the desired interaction with the scene is triggered. Sec. 4.3 and 4.4 give an overview of the major algorithms and approaches. The render engine does not rely on captured data alone; it also uses additional data from other sources, such as edge cloud servers (see Sec. 4.8) or 3D data available on the device itself. The rendered scene is then fed back to the user, who perceives it through XR headsets, other types of displays, or other sensory stimuli. The complete set of technologies and applications is described in the following chapters.
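The data flow described for Figure 2 can be sketched in a few lines of Python. This is only an illustrative sketch, not part of the XR4ALL system: the names `SensorSample`, `Frame` and `render`, the fields they carry, and the asset strings are all hypothetical, and a real render engine does far more than merge lists. The sketch only shows the order of the steps: capture user input, combine it with on-device and edge cloud data, and feed one frame back to the user.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SensorSample:
    """One captured user input (hypothetical structure, not from the report)."""
    head_yaw_deg: float              # motion sensor: head rotation about the vertical axis
    gesture: Optional[str] = None    # interaction recognised from visual or audio input


@dataclass
class Frame:
    """What is fed back to the user's display for one update."""
    view_yaw_deg: float
    visible_assets: List[str]
    triggered: List[str] = field(default_factory=list)


def render(sample: SensorSample, local_assets: List[str], remote_assets: List[str]) -> Frame:
    """Combine captured input with on-device and edge cloud data into one frame."""
    # Render the correct viewpoint: normalise the tracked head yaw to [0, 360).
    view = sample.head_yaw_deg % 360.0
    # Scene content comes from the device itself and from remote sources.
    assets = sorted(set(local_assets) | set(remote_assets))
    # Trigger the desired interaction, if the sensors captured one.
    triggered = [sample.gesture] if sample.gesture else []
    return Frame(view, assets, triggered)


frame = render(SensorSample(head_yaw_deg=370.0, gesture="select"),
               local_assets=["table"], remote_assets=["avatar"])
print(frame)
```

In an actual system this function would run once per display refresh, with the sensor sample and the remote data arriving asynchronously.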

Notes

- [1] P. Milgram, H. Takemura, A. Utsumi, and F. Kishino, "Augmented Reality: A class of displays on the reality-virtuality continuum", Proc. SPIE vol. 2351, Telemanipulator and Telepresence Technologies, pp. 2351–34, 1994.

- [2] R. T. Azuma, "A Survey of Augmented Reality", Presence: Teleoperators and Virtual Environments, vol. 6, issue 4, pp. 355-385, 199

XR market watch

Market development and forecast

Market research experts agree on the tremendous growth potential of the XR market. Zion Market Research valued the global AR and VR market (by device, offering, application, and vertical) at around USD 26.7 billion in 2018. According to its report issued in February 2019, the global market is expected to reach approximately USD 814.7 billion by 2025, at a compound annual growth rate (CAGR) of 63.01% between 2019 and 2025 [3]. Mordor Intelligence [4] expects a similar annual growth rate of over 65% for the forecast period from 2019 to 2024. It is assumed that the convergence of smartphones, mobile VR headsets, and AR glasses into a single XR wearable could replace all other screens, from mobile devices to smart TVs. Mobile XR has the potential to become one of the world’s most ubiquitous and disruptive computing platforms. MarketsandMarkets [5][6] forecasts the AR and VR markets separately (by offering, device type, application, and geography): the AR market, valued at USD 10.7 billion in 2019, is expected to reach USD 72.7 billion by 2024, and the VR market, valued at USD 6.1 billion in 2020, is expected to reach USD 20.9 billion by 2025. Gartner and Credit Suisse [7][8] predict significant market growth for VR & AR hardware and software, driven by promising opportunities across sectors, to USD 600-700 billion in 2025 (see Figure 3). With 762 million users owning an AR-compatible smartphone in July 2018, the AR consumer segment is expected to grow substantially, also fostered by AR development platforms such as ARKit (Apple) and ARCore (Google).
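The growth figures above can be cross-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The following is a minimal sketch; the helper names `cagr` and `project` are illustrative, and the number of compounding years is an assumption read off the quoted base and target years (for example, seven years from the 2018 valuation to 2025), not something the cited reports state.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate implied by a start and an end value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value reached after compounding start_value at the given annual rate."""
    return start_value * (1.0 + rate) ** years


# Values in USD billion, taken from the paragraph above.
# Assumption: 7 compounding years for 2018 -> 2025, 5 years for the other two.
zion_2025 = project(26.7, 0.6301, 7)
ar_rate = cagr(10.7, 72.7, 5)   # AR market, 2019 -> 2024
vr_rate = cagr(6.1, 20.9, 5)    # VR market, 2020 -> 2025

print(f"Zion projection for 2025: USD {zion_2025:.1f} billion")
print(f"Implied AR CAGR: {ar_rate:.1%}")
print(f"Implied VR CAGR: {vr_rate:.1%}")
```

Under these assumptions, compounding USD 26.7 billion at 63.01% for seven years gives roughly USD 817 billion, which is consistent with the reported USD 814.7 billion. The implied rates for the separate AR and VR forecasts come out noticeably lower, at roughly 47% and 28% per year.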